The Challenge & Our Mission:

iRAISE is a global initiative to ensure that AI supports the healthy cognitive and emotional development of children. Led by everyone.AI and the Paris Peace Forum, it brings together researchers, industry, governments, and civil society to design AI with young people in mind—right from the start.

Grounded in neuroscience and child development, iRAISE fosters open, collaborative spaces where knowledge is shared, beneficial AI products are shaped, standards are developed, and policies are guided. Our mission is to build digital environments that are beneficial, inclusive, and truly supportive of children’s development.

This is a shared mission—for all who believe AI should grow for children, not at their expense.

A Global Initiative for iRAISE Alliance

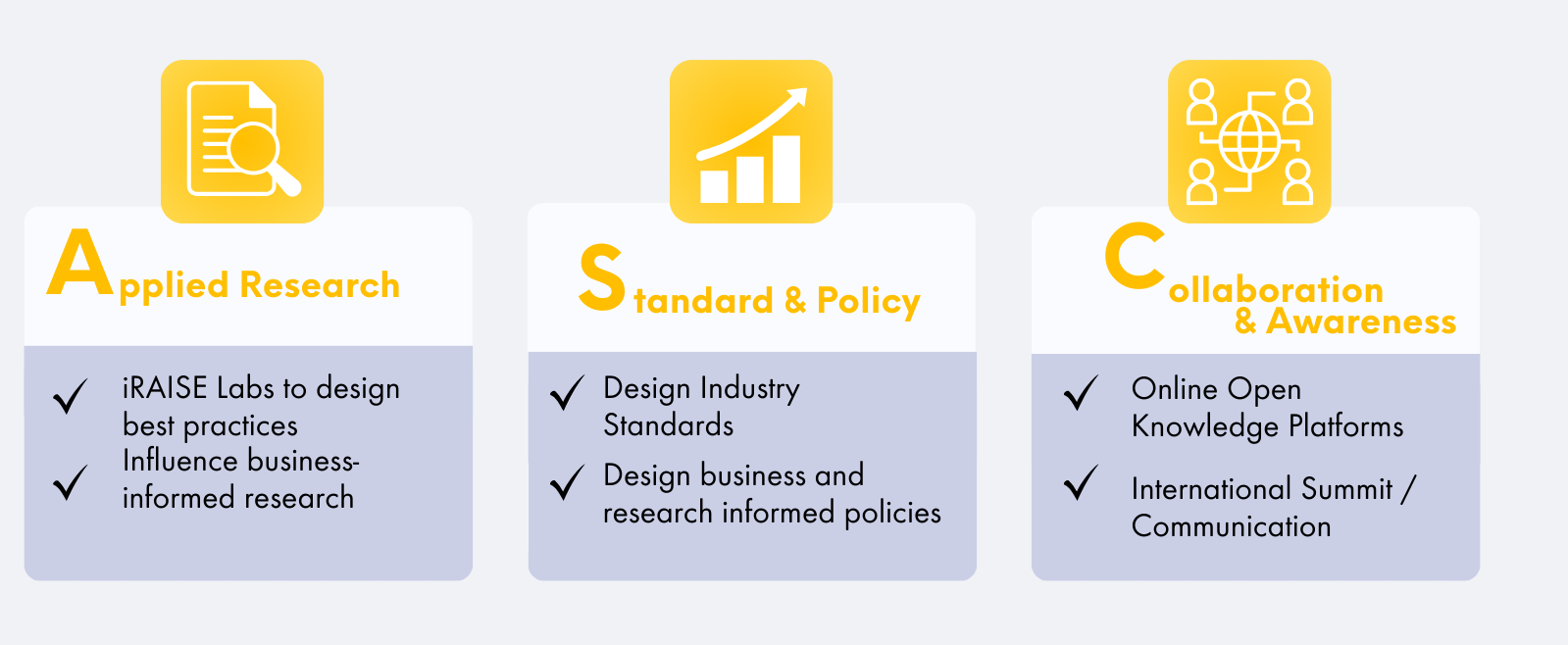

3 Pillars

The Coalition's Participants:

Governments

Companies

Innovative Startups

Labs / Universities

NGOs

Youth Voices NGO

Global Organizations supporting the work of the coalition

Experts & Researchers

AI Experts:

Pr. Stuart Russell, Professor of Computer Science, Cognitive Science, and Computational Precision Health, UC Berkeley, UCSF (US)

Dr. Luc Julia, Chief Scientific Officer, Renault Group

Gregory Renard, Head Applied ML, Board President of everyone.AI

Pr. Laurence Devillers, Researcher at CNRS, President of the Blaise Pascal Foundation, Author, Sorbonne

Human Sciences Experts:

Dr. Sara Grimes, Wolfe Chair in Scientific and Technological Literacy and Professor in Communication Studies, McGill University

Dr. Mathilde Cerioli, Chief Scientific Officer, everyone.AI

Dr. Maxime Derian, Research Associate at the C²DH – Digital Anthropology & Environmental History, Université du Luxembourg

Pr. Isabelle Hau, Executive Director, Stanford Accelerator for Learning, and Author of “Love to Learn” (US)

Dr. Jeff Hancock, Director, Social Media Lab, Stanford University

Dr. Jodi Halpern, Ph.D, Chancellor’s Chair, UC Berkeley

Dr. Mizuko Ito, Ph.D, Director, Connected Learning Lab, and Professor in Residence, University of California – Irvine

Dr. Amin Marei, Ph.D, Lecturer at Harvard Graduate School of Education

Dr. Caroline Lancelot Miltgen, Ph.D, Professor, Audencia

Eglė Celiesienė, Research Fellow, Lithuanian College of Democracy

Policy Experts:

David Harris, Chancellor’s Public Scholar, UC Berkeley

Pr. Florence G’Sell, Visiting Professor Private Law, Stanford Cyber Policy Center

Dr. Teddy Nalubega, Head of AI Division, Knowledge Consulting Limited

Dr. Vera Radeva, Ph.D, Lecturer at Sciences Po Paris, Affiliated PhD at CERI

Call for Action, Multi-Stakeholder Coalition for Beneficial AI in Childhood Development

AI is rapidly reshaping our world and how we interact with our environment. For children, however, those changes present unique challenges, as their brains are still developing. From birth to around 25 years of age, the brain experiences sensitive periods when learning is at its peak for specific skills, making it exceptionally adaptable but also leaving it particularly vulnerable in inadequately stimulating environments. AI holds significant potential in this context—it can either enhance development, notably through educational tools, or hinder it by reshaping the experiences that influence how children engage with the world and those around them.

To ensure AI supports rather than disrupts development, we must integrate deep human insight with technical innovation. AI can foster cognitive and socio-emotional growth, but only when it is designed with a deep understanding of human functioning. Unlocking its potential while minimizing harm requires building transdisciplinary collaborations across all stakeholders, including governments, international organizations, tech companies, investors, researchers, and civil society representatives (NGOs, educators, families), to ensure the development, deployment and adoption of beneficial AI for children.

everyone.AI and the Paris Peace Forum are co-launching a multistakeholder international coalition at the Paris AI Action Summit.

Launched during the AI Action Summit

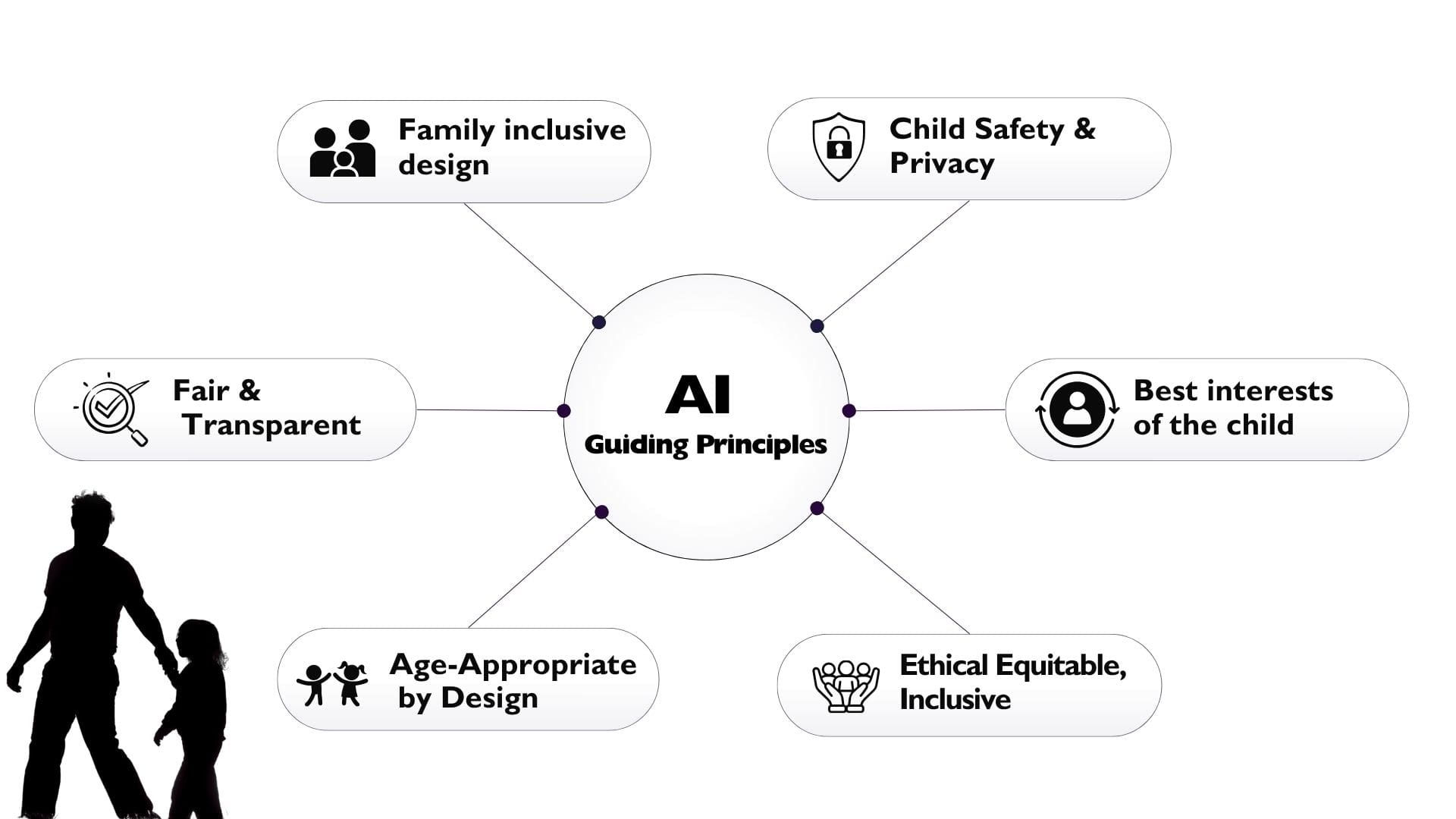

The Guiding Principles:

Objectives and Actions:

1. Establish, through continuous and structured dialogue including all stakeholders, shared guidelines that can support the evaluation and mitigation of the negative impacts of AI products used by children. This work will be ongoing and evolve as our understanding improves. It will promote responsible AI development and deployment, prioritizing child safety, wellbeing and perspectives while allowing for equitable opportunities for all actors.

2. Leverage scientific evidence to systematically assess the benefits and understand the risks of AI products, and encourage long-term studies on the developmental, psychological, and societal impacts of AI on children.

3. Create a collaborative network of experts to provide ongoing guidance through dedicated committees and consultations, facilitating informed decision-making, fostering innovation, and enabling AI design and deployment to be aligned with children’s best interests, developmental needs and rights.

4. Facilitate transdisciplinary international collaborations among all stakeholders while acknowledging each industry’s unique strengths and challenges.

5. Promote AI education and literacy by developing comprehensive guidelines, pedagogical practices and educational programs that empower product developers, educators, parents, caregivers and children with the knowledge and tools to navigate and apply AI safely, responsibly and effectively.

Join the Movement!

Is your Government, Company, or NGO interested in making an impact? Join the Coalition and collaborate with like-minded partners to drive meaningful change. For more information, contact Anne-Sophie at annesophie@everyone.ai.

David Evan Harris
